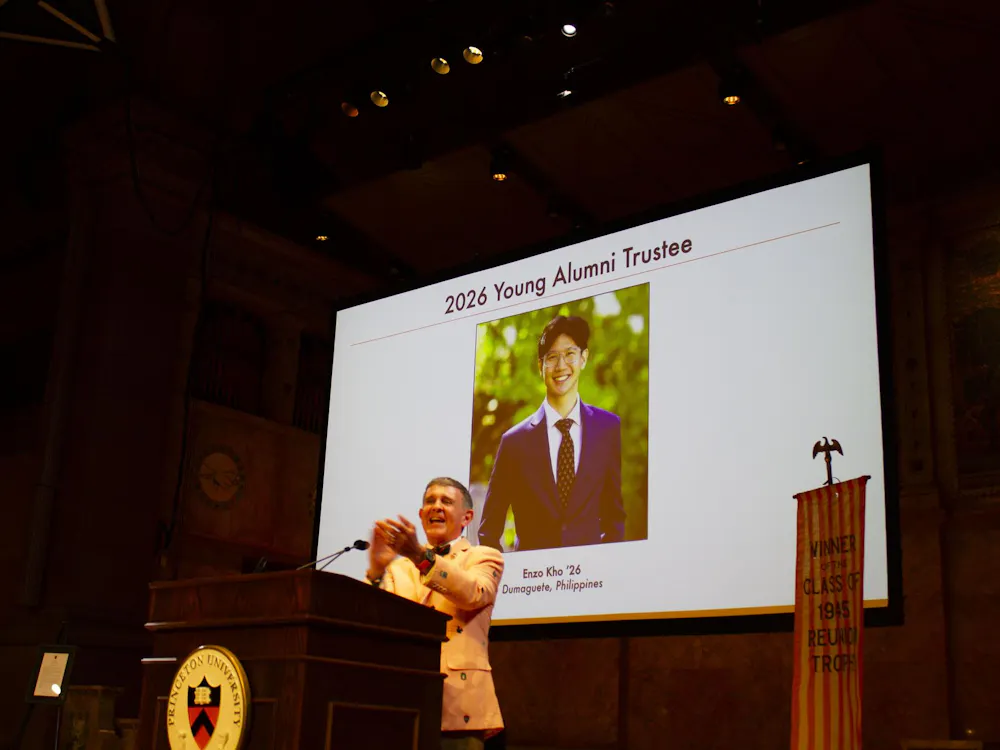

In an optional lecture delivered to students enrolled in COS 126: Computer Science: An Interdisciplinary Approach, New York Law School Professor Ari Waldman discussed how engineers typically view data privacy and where he believes that conversation can be improved.

Waldman, a professor of law and director of the Innovation Center for Law and Technology at New York Law School and affiliate fellow of the Information Society Project at Yale Law School, discussed privacy issues in the technology industry and answered student questions during the 40-minute talk on Nov. 12. Students present at the talk said they saw value in bringing this discussion up early in their computer science education.

The talk was delivered in conjunction with a series of COS 126 guest lectures.

Waldman began his lecture by walking through a 2017 Daily Princetonian article on concerns related to Tigerbook to illustrate different interpretations of the definition of privacy.

Tigerbook is an online directory of University undergraduates, originally created as a capstone project for COS 333: Advanced Programming Techniques.

Waldman pointed out that based on the quotes included in the Tigerbook-related article, there were two major definitions of privacy, either focused on unauthorized access or confidentiality.

Waldman then defined “discourse” as how language is used to communicate power and spoke about how differences in the way different people define this discourse may open opportunities to misuse data.

Catering to an audience of COS 126 students, Waldman discussed the role of different privacy discourses in light of technology, where engineers and software developers have the power to decide if and how data is tracked and stored.

Relating back to the article, Waldman pointed out that the original mission of Tigerbook, according to one of its creators, was more focused on the optimization of efficiency and use, and not privacy.

He called this lack of concern for privacy a “discourse and education problem.”

Waldman went on to use the examples of Uber and Snapchat to show real companies acting negligently in how they handle privacy. Waldman described his discussions with engineers and computer scientists on their experience with privacy issues while designing.

“In very few cases, it’s this nefarious plot to ignore privacy issues that came up — most of the time it just never came up in the process,” he said.

He attributed the problem to engineers and computer scientists coming out of highly ranked technology programs thinking about privacy in a very narrow-minded way, focused on secrecy and security.

Waldman believes that the issue comes from engineers integrating their own definitions of privacy into their products and that the problem requires thinking about the different ways people think about privacy.

According to Waldman, academics and researchers such as himself look at privacy with a broader definition. He mentioned that privacy can include autonomy, trust, intimacy, and social value.

He stated that incorporating a broader definition of privacy discourse into products will lead to more robust privacy protections and allow for a better system in spotting privacy-related issues.

Waldman used the example of revenge pornography to support the need for broader definitions of privacy discourse. In this case, if privacy discourse were solely focused on the aspect of “consent,” victims of revenge porn would have few rights, according to Waldman, because they consented to the photos being taken in the first place.

He then went on to claim that if privacy discourse were defined based more on intimacy, revenge porn victims would have more rights because naked photos are inherently intimate regardless of consent.

“Different definitions of privacy come with a different power dynamic,” he said. “They allow for different types of opportunities to reclaim or not reclaim your privacy.”

Waldman pointed out that at the business level, CEOs and chief privacy officers often view privacy through the lens of compliance, which severely narrows how privacy is considered.

He closed his talk by saying that if developers focus solely on optimization and efficiency during the design process and leave privacy to the end, it will often be ignored.

Waldman then answered questions from the students who attended the lecture.

In his responses, he used Venmo as an example to claim that companies often make their terms of service difficult to understand, or make opting out of certain forms of data collection harder, to discourage users from trying to protect their own privacy.

He encouraged students to make privacy a part of their product and company missions. Waldman pointed out that the elimination of “friction” that comes from aggregating data into one system makes it easier to have privacy issues.

Students who attended the lecture said they saw the importance of raising these points earlier in their education.

“Addressing these questions earlier than later in our computer science education reminds engineers to incorporate privacy into their ‘list of things to think about’ before the data is inappropriately used or managed,” Hien Pham ’23, who intends to concentrate in computer science, wrote in an email to The Daily Princetonian.

Dr. Jérémie Lumbroso, a professor of COS 126, faculty adviser for the developers of Tigerbook, and organizer of the talk, expressed his hopes to see more conversations related to privacy discourse in his department and others in an email to the ‘Prince.’

“I believe it's important to engage undergraduates early in both the promise, and responsibility of building technology … they are going to be the creators and the users of tomorrow,” Lumbroso wrote. “As creators, they need to have enough discernment to build products that are not going to jeopardize our established society and public discourse.”