“There are two components to the idea of conflict,” said Gen. John R. Allen (ret.), president of the Brookings Institution, in his opening remarks. “The nature of war and the character of war.”

“They exist in equilibrium,” he explained. Technology, which Allen identified with the character of war, helps achieve the best efficiency, outcome, and capability of the military, but often, “the technology outstrips the capacity of the human dimension” — the nature of war.

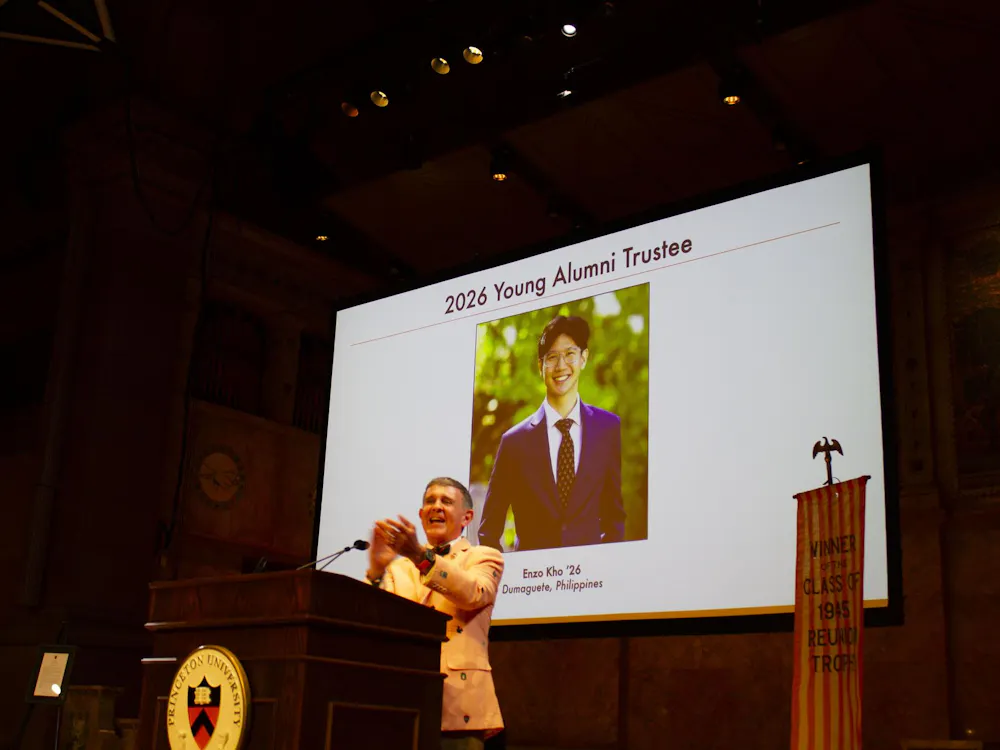

Therein lie the practical and ethical challenges of artificial intelligence (AI), which were explored in the lecture “AI on the Battlefield: Ethics and Rules of Engagement,” held in the Maeder Hall Auditorium on Wednesday, Oct. 2, as part of the G. S. Beckwith Gilbert ’63 Lecture Series. Edward W. Felten, professor of computer science and public affairs, moderated the talk. Felten previously served as Chief Technologist for the Federal Trade Commission and as Deputy U.S. Chief Technology Officer in the Obama White House. Allen is a retired United States Marine Corps four-star general who formerly commanded the NATO International Security Assistance Force and U.S. Forces in Afghanistan.

Felten noted that AI has attracted enormous commercial interest and a large volume of commercial research. For once, he said, “the military is not in the driver’s seat of the basic development of technology here.”

The reason for this, Allen explained, is that the nature of the technology is not clear. The “outer edges,” he said, are not fully understood, even by experts in the field.

His main concern, however, is not that technology is changing but the “mind-boggling” rate at which that change is occurring. As such, it is crucial for the private sector, in its relation to civil society and thus to the military, to fully understand the process of resource development and deployment of capabilities when it comes to artificial intelligence.

Allen stressed the importance of applying this technology with the highest level of ethics we are capable of.

“This is a capability that has the capacity for great good,” he said, but it can also be “applied with great destructiveness.”

Even so, the enormous benefits of AI are not lost on him. Today’s supercomputing capabilities have made possible big data analytics that would not have been remotely feasible in the past.

Allen cited his own experiences in Afghanistan as demonstrating the potential of AI, in the form of both integrative efforts and programmed innovation. In human resource management, he said, AI can cut the time spent filtering through thousands of candidates to mere seconds in order to “put the right person in the right position at the right spot at the right time.”

AI can also save the military millions of dollars on supply discipline, Allen added. In one notable case, as Allen prepared to shrink the U.S. footprint in Afghanistan, he found 35,000 abandoned armored vehicles and 100,000 shipping containers full of supplies across 835 bases. Finding the supplies saved the military hundreds of millions of dollars, but the work had to be done manually over several months.

“If all of that had been monitored using artificial intelligence through geospatial analysis,” Allen claimed, “I could know instantly what was on every base and inside every container.”

Allen also highlighted potential benefits in combat situations. AI could allow for instantaneous diagnosis through evaluation of x-rays and analysis of blunt force organ trauma via wearable sensors. “As that individual is laid on the operating table in a surgical hospital, [we will] be completely ready to do what’s necessary to save that individual’s life.”

On the flip side, AI applications in Lethal Autonomous Weapons Systems (LAWS) are rife with ethical concerns. Allen and the U.S. military prescribe three laws of armed conflict when AI is involved: necessity to employ force, distinction (the ability to discriminate between combatants and non-combatants and to estimate collateral damage), and proportionality (that is, applying no more force than is absolutely needed).

With this in mind, Allen stressed the distinction between automatic and autonomous systems.

The latter, under the International Committee of the Red Cross definition that Allen himself has embraced, must meet three additional requirements: the system must be recallable, must recognize when the necessity for force has passed, and must clearly distinguish between combatants and non-combatants. Despite our desire for speed and efficiency, he said, we are still “nowhere near where all three things can be achieved by an autonomous system.”

“We as a nation — we as a people — have a military which is driven and guided by ethical principles,” he added. “We don’t engage in the destruction of life lightly.”

Allen referred to the Department of Defense’s Directive 3000.09, written in 2012, as “one of the best articulations ever” on how the United States will handle autonomous systems. The salient requirement lies in the third enclosure: the system must incorporate “the necessary capabilities to allow commanders and operators to exercise appropriate levels of human judgment in the use of force.”

Felten highlighted the overall lack of international accountability and treaties regarding this technology and asked Allen to discuss the potential measures the United States can take to preserve integrity.

In the hands of rogue nations, this domain in the cyber environment is easily corrupted. Cyber intrusion allows for the corruption of databases — both overt and covert — and generative adversarial networks (GANs) can create advanced images and topographical maps with absolutely no basis in reality.

“When we talk about what artificial intelligence can do for us,” Allen explained, “we have to not just recognize that there are real military advantages, [but] there are real military liabilities if we don’t secure ourselves ... This is a very complex environment that we have to fully appreciate all the dynamics associated with.”

In the question-and-answer portion of the event, Allen elaborated on what he perceived the United States’ role as a global leader to be — something that far transcended the realm of artificial intelligence.

“First of all, as a nation, we have to lead,” Allen said. “To reassert our commitment to multilateralism, to reassert our commitment to human rights and humanitarian law, and to reassert our commitment to the community of our economic partners.”

He cited the United States’ recent withdrawals from “one U.N. agency after another” and various treaties as a “huge blow to the community of nations.”

“We can handle our differences we have with the Chinese. We can handle the Russians. We can handle the jihadists,” he said.

The one thing the U.S. cannot handle, though, is the climate. “It may be too late,” he added. “When the United States withdrew its leadership from the Paris Climate Accord, when the United States doesn’t show up to the report on sustainable development goals ... that’s the kind of challenge that is really existential if we’re not careful.”

Allen stressed the importance of reestablishing America as the recognizable beacon of liberal democracy it once was. “I’ve met many of the leaders of the countries whose forces I’ve [worked] with,” he explained. “They’re desperate for the reemergence of American global leadership.”

“Much of what the world has been able to achieve in the aftermath of the Cold War has been directly as a result of the United States’ leadership in partnership with similar countries. And in the absence of this partnership, there goes climate, there goes sustainable development goals, there goes the TPP [Trans-Pacific Partnership], we pulled out of the JCPOA [Iran nuclear deal] ... and we backed ourselves into a crisis in the Middle East in which we find ourselves embroiled in a war.”

“This requires American leadership,” Allen proclaimed. “And frankly, I don’t see much of it these days.”